About

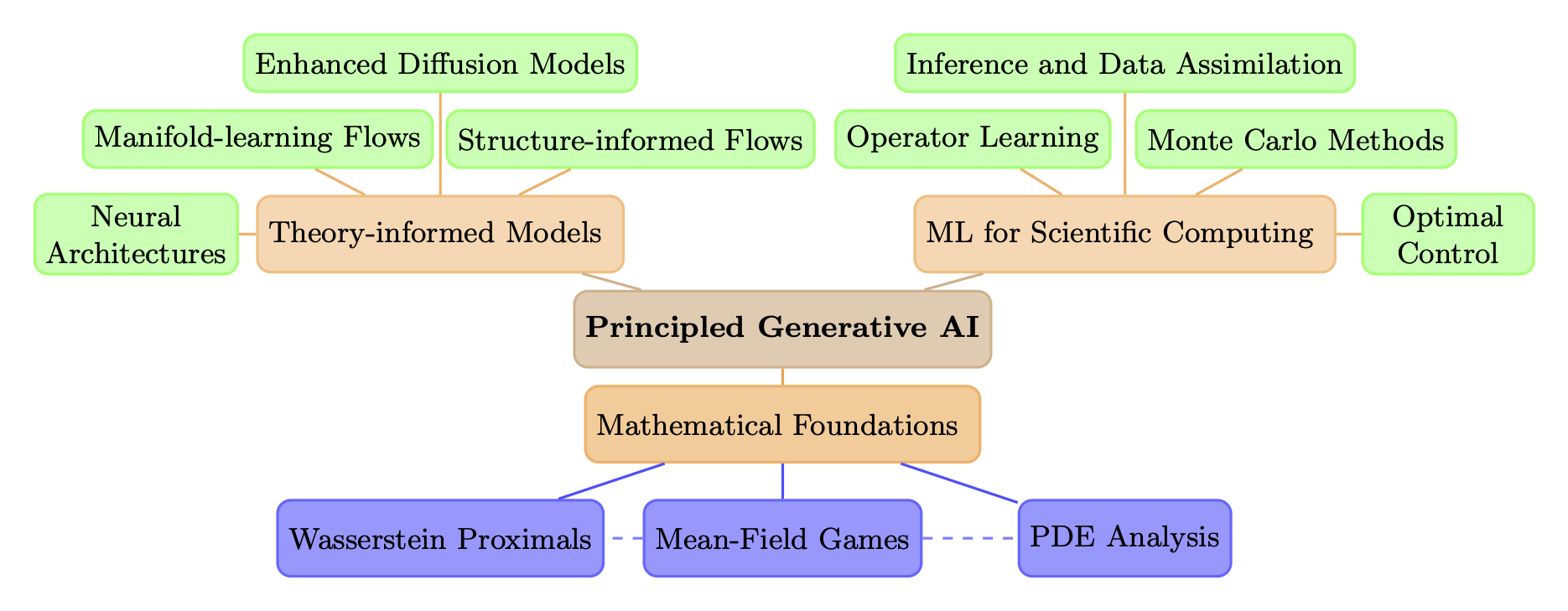

I am a postdoctoral research associate in the School of Data Science and Society at the University of North Carolina at Chapel Hill, working with Amarjit Budhiraja. My research interests lie broadly at the intersection of the mathematics of generative machine learning, mathematical control theory, and Bayesian computation.

I will be joining the Department of Mathematics at Rutgers University as an Assistant Professor in Fall 2026.

I was previously a postdoctoral research associate jointly affiliated with the Division of Applied Mathematics at Brown University and the Department of Mathematics and Statistics at UMass Amherst, working with Paul Dupuis, Markos Katsoulakis, and Luc Rey-Bellet.

I earned my PhD in Computational Science and Engineering from MIT in 2022. My advisor was Youssef Marzouk, who heads the Uncertainty Quantification group. I earned my Master’s degree in Aeronautics & Astronautics at MIT in 2017, and my Bachelor’s degrees in Engineering Physics and Applied Mathematics at UC Berkeley in 2015. I was an MIT School of Engineering MathWorks Fellow for 2019-2020. I spent the summer of 2017 as a research intern at United Technologies Research Center (now Raytheon), where I worked with Tuhin Sahai on novel queuing systems.

Recent News

February

Our paper "Wasserstein proximal operators describe score-based generative models and resolve memorization" has been accepted to the SIAM Journal on Mathematics of Data Science!

November

New preprint on Knothe-Rosenblatt maps (triangular maps) and optimal transport: in "Knothe-Rosenblatt maps via soft-constrained optimal transport," we show that the KR map can be obtained as the limit of maps that solve a relaxed optimal transport problem with a soft constraint. Our results provide theoretical justification for variational methods that estimate KR maps by minimizing a divergence, and they suggest new methods for constructing these maps. This is joint work with Ricardo Baptista, Franca Hoffmann, and Minh Nguyen.

October

New preprint on transformer architectures: in "Stability of Transformers under Layer Normalization," we study how the placement of layer normalization affects the stability of transformers, analyzing both the growth of hidden states and the backpropagation of gradients. Our theory explains when different placements lead to stable training and guides the scaling of residual connections for improved performance. This is joint work with Kelvin Kan, Xingjian Li, Tuhin Sahai, Stan Osher, and Markos Katsoulakis.

Recent and upcoming events

June

Scientific Computing and Differential Equations (SciCADE 2026), June 29 - July 3, 2026.

SIAM Conference on Optimization, June 2-5, 2026.

April

Data Science and Statistics Seminar, Department of Mathematics, University of Tennessee, Knoxville, April 9, 2026.

March

SIAM Conference on Uncertainty Quantification, March 22-25, 2026.

Computational and Applied Mathematics Seminar, School of Mathematical and Statistical Sciences, Arizona State University, March 16, 2026.

Level Set Collective Seminar, Department of Mathematics, UCLA, March 9, 2026.